1 Answer

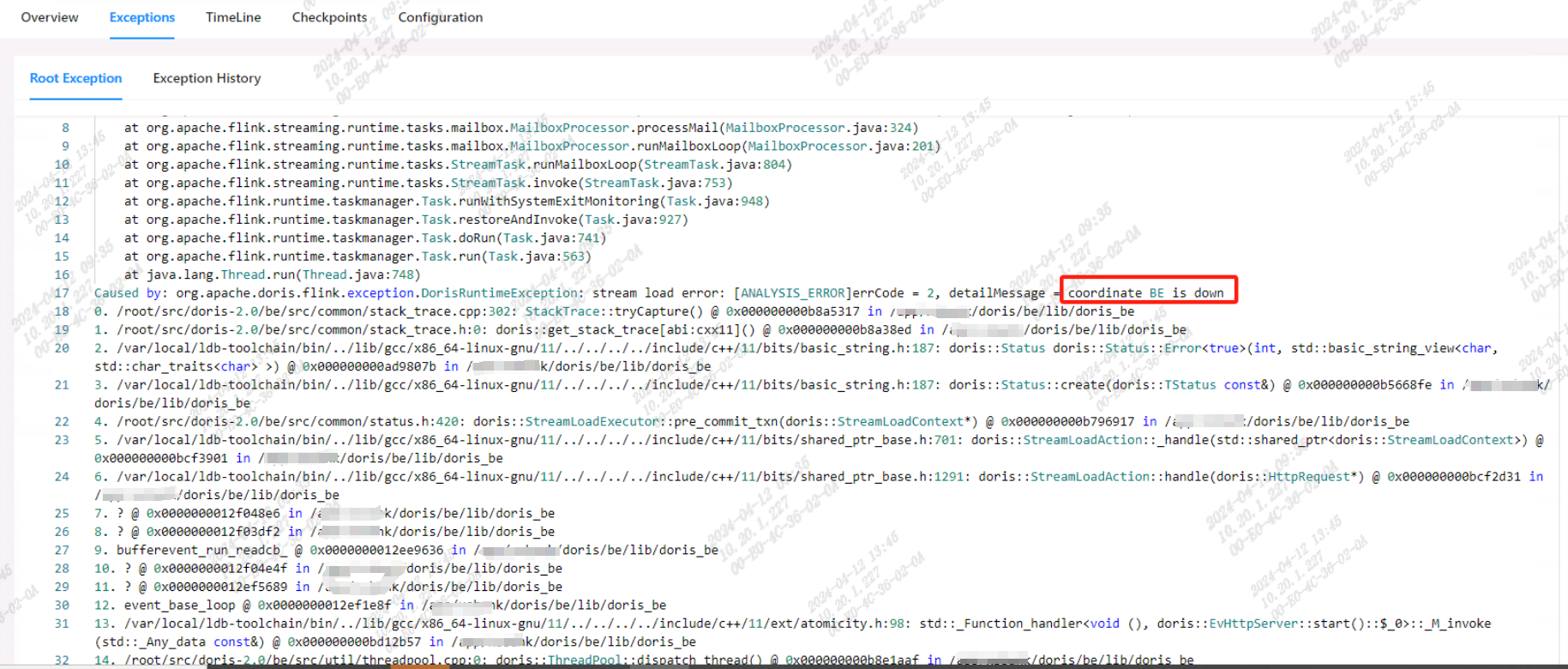

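The default heartbeat timeout is 5s. As soon as the heartbeat stops, the FE immediately aborts the transactions coordinated by that BE, even though the BE has not actually gone down.

However, a BE transaction does not need the FE's involvement while a load is in progress, so a 5s threshold is too sensitive. I suggest changing this so that the coordinator BE's transactions are aborted only after more than 1 minute without a heartbeat.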
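To make the suggestion concrete, here is a minimal sketch of the proposed policy in Python. It is not Doris's actual FE code: the class `HeartbeatTracker`, its methods, and the `grace_seconds` parameter are hypothetical names chosen for illustration. The only idea it shows is that a short heartbeat gap (for example 5s) should not trigger an abort, while a gap longer than the grace period (here 60s) should.

```python
from dataclasses import dataclass


@dataclass
class HeartbeatTracker:
    """Hypothetical per-BE tracker; not part of Doris."""

    # Assumed grace period: abort only after this many seconds of silence.
    grace_seconds: float = 60.0
    # Timestamp (in seconds) of the most recent heartbeat seen from the BE.
    last_heartbeat: float = 0.0

    def record_heartbeat(self, now: float) -> None:
        """Remember when the BE last responded."""
        self.last_heartbeat = now

    def should_abort_transactions(self, now: float) -> bool:
        """True only when the silence exceeds the grace period."""
        return (now - self.last_heartbeat) > self.grace_seconds


tracker = HeartbeatTracker(grace_seconds=60.0)
tracker.record_heartbeat(now=100.0)

# 5s without a heartbeat: keep the coordinator BE's transactions alive.
print(tracker.should_abort_transactions(now=105.0))
# More than 60s without a heartbeat: now it is reasonable to abort.
print(tracker.should_abort_transactions(now=161.0))
```

Passing the clock value in explicitly keeps the sketch deterministic; a real implementation would read a monotonic clock and would have to decide how this threshold interacts with the existing heartbeat timeout.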
