Doris version: 3.1.2

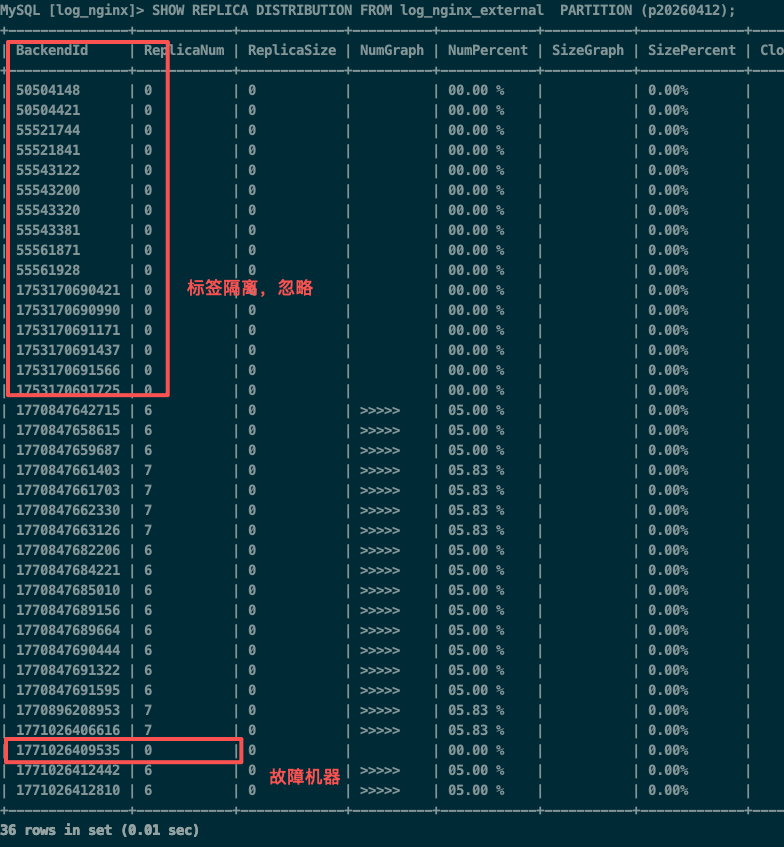

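One BE node had a disk failure. After replacing the disk and adding the node back to the cluster, its tablets are not being migrated back (be_custom.conf was cleared). Taking it offline and re-adding it did not help either: only tablets of newly created partitions get assigned to this node. A week has passed and the problem persists.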

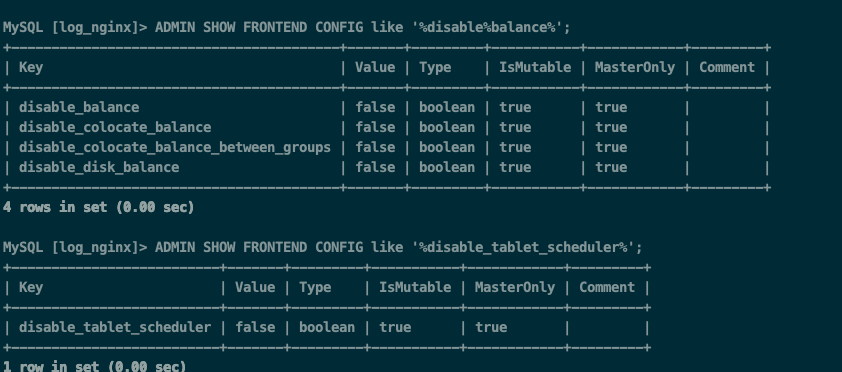

The FE is configured with the balancing strategy: tablet_rebalancer_type = partition

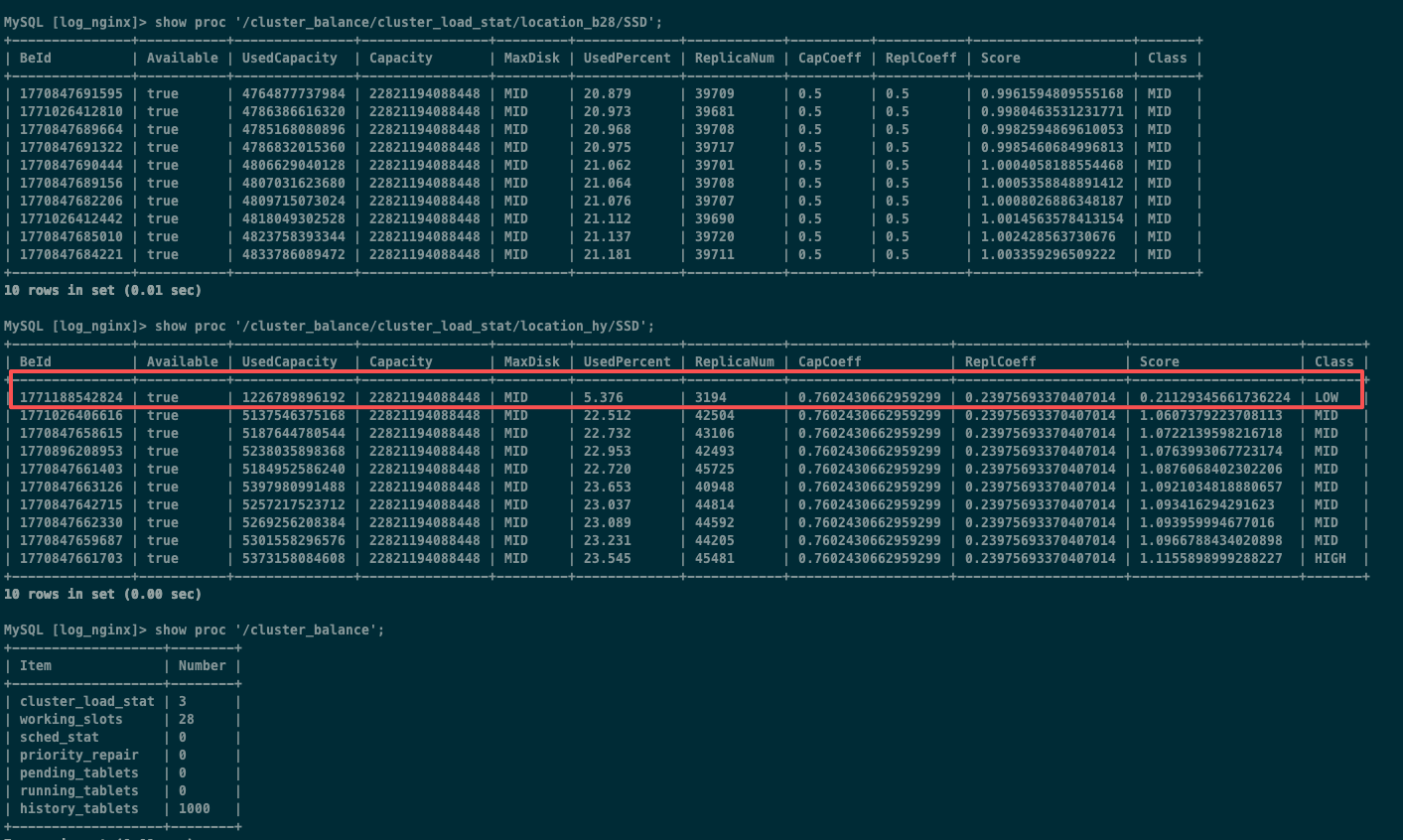

The BE nodes are assigned different tags (b28 and hy, 10 machines each). Below are the details of the two groups of machines.

Balancing has not been disabled.

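I have confirmed that the node status is normal, the trash holds no data, and no transactions are stuck. I also tried restarting the FE.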

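The FE log shows one pattern that keeps repeating: PartitionRebalancer creates clone tasks and, partition by partition, creates new tablet replicas on the nodes that hold fewer replicas, but each newly cloned replica is immediately cleaned up by the redundant-replica check. I don't know whether this is what is blocking the balancing.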

FE log details:

2026-04-17 17:02:26,250 INFO (thrift-server-pool-73|156) [TabletSchedCtx.finishCloneTask():1193] new replica [replicaId=1776397679836, BackendId=1770847642715, version=44978, dataSize=0, rowCount=25209013, lastFailedVersion=-1, lastSuccessVersion=44978, lastFailedTimestamp=-1, schemaHash=1962543711, state=CLONE, isBad=false] of tablet 1768474955969 set further repair watermark id 1420017752

2026-04-17 17:02:26,250 INFO (thrift-server-pool-73|156) [TabletSchedCtx.finishCloneTask():1235] clone finished: tablet id: 1768474955969, status: HEALTHY, state: FINISHED, type: BALANCE, balance: BE_BALANCE, priority: NORMAL, tablet size: 0, from backend: 1771026406616, src path hash: 8500455285475874782, to backend: 1770847642715, dest path hash: -8310306339795913933, visible version: 44978, committed version: 44978, replica [replicaId=1776397679836, BackendId=1770847642715, version=44978, dataSize=0, rowCount=25209013, lastFailedVersion=-1, lastSuccessVersion=44978, lastFailedTimestamp=-1, schemaHash=1962543711, state=NORMAL, isBad=false], replica old version -1, need further repair true, is catchup false

2026-04-17 17:02:26,250 INFO (thrift-server-pool-73|156) [TabletScheduler.removeTabletCtx():1768] remove the tablet tablet id: 1768474955969, status: HEALTHY, state: FINISHED, type: BALANCE, balance: BE_BALANCE, priority: NORMAL, tablet size: 0, from backend: 1771026406616, src path hash: 8500455285475874782, to backend: 1770847642715, dest path hash: -8310306339795913933, visible version: 44978, committed version: 44978. because: finished

2026-04-17 17:02:38,480 INFO (tablet checker|156) [TabletScheduler.addTablet():301] Add tablet to pending queue, tablet id: 1768474955969, status: NEED_FURTHER_REPAIR, state: PENDING, type: REPAIR, priority: HIGH, tablet size: 0, visible version: -1, committed version: -1

2026-04-17 17:02:40,151 INFO (tablet scheduler|156) [TabletSchedCtx.checkFurtherRepairFinish():868] replica [replicaId=1776397679836, BackendId=1770847642715, version=44978, dataSize=0, rowCount=25209013, lastFailedVersion=-1, lastSuccessVersion=44978, lastFailedTimestamp=-1, schemaHash=1962543711, state=NORMAL, isBad=false] of tablet 1768474955969 has catchup with further repair watermark id 1420017752

2026-04-17 17:02:40,152 INFO (tablet scheduler|156) [TabletScheduler.removeTabletCtx():1768] remove the tablet tablet id: 1768474955969, status: NEED_FURTHER_REPAIR, state: PENDING, type: REPAIR, priority: HIGH, tablet size: 0, visible version: 44978, committed version: 44978. err: further repair all catchup. because: further repair all catchup

2026-04-17 17:02:40,152 INFO (tablet scheduler|156) [TabletScheduler.addTablet():301] Add tablet to pending queue, tablet id: 1768474955969, status: REDUNDANT, state: PENDING, type: REPAIR, priority: VERY_HIGH, tablet size: 0, visible version: -1, committed version: -1

2026-04-17 17:02:45,234 INFO (tablet scheduler|156) [TabletScheduler.deleteReplicaInternal():1212] set replica 1776397679836 on backend 1770847642715 of tablet 1768474955969 state to DECOMMISSION due to reason high load backend

2026-04-17 17:02:45,234 INFO (tablet scheduler|156) [TabletScheduler.deleteReplicaInternal():1221] set decommission replica 1776397679836 on backend 1770847642715 of tablet 1768474955969 pre watermark txn id 1420019668

2026-04-17 17:02:50,121 INFO (tablet scheduler|156) [TabletScheduler.deleteReplicaInternal():1235] set decommission replica 1776397679836 on backend 1770847642715 of tablet 1768474955969 post watermark txn id 1420020462

2026-04-17 17:02:54,623 INFO (tablet scheduler|156) [TabletScheduler.deleteReplicaInternal():1275] delete replica. tablet id: 1768474955969, backend id: 1770847642715. reason: DECOMMISSION state, force: false

2026-04-17 17:02:54,623 INFO (tablet scheduler|156) [TabletScheduler.removeTabletCtx():1768] remove the tablet tablet id: 1768474955969, status: REDUNDANT, state: PENDING, type: REPAIR, priority: VERY_HIGH, tablet size: 0, visible version: 44978, committed version: 44978. err: redundant replica is deleted. because: redundant replica is deleted
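
To check how often this clone-then-delete cycle happens across the whole fe.log, something like the following Python sketch could be used. It is only a rough helper: the function name `find_undone_balance_clones` and the regular expressions are my own and are derived solely from the two log line formats quoted above ("clone finished ... type: BALANCE ... to backend" and "delete replica. tablet id ..., backend id ..."), so they may need adjusting for other Doris versions or log layouts.

```python
import re
from datetime import datetime

# Patterns are assumptions based only on the FE log lines quoted in this post.
TS = r"(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3})"
CLONE_RE = re.compile(
    TS + r".*clone finished: tablet id: (\d+),.*?type: BALANCE,.*?to backend: (\d+),"
)
DELETE_RE = re.compile(
    TS + r".*delete replica\. tablet id: (\d+), backend id: (\d+)\."
)


def parse_ts(text):
    """Parse an FE log timestamp such as '2026-04-17 17:02:26,250'."""
    return datetime.strptime(text, "%Y-%m-%d %H:%M:%S,%f")


def find_undone_balance_clones(lines):
    """Return (tablet_id, backend_id, seconds) for every BALANCE clone whose
    freshly cloned replica was later deleted from the same backend."""
    finished = {}  # (tablet_id, backend_id) -> time the clone finished
    undone = []
    for line in lines:
        m = CLONE_RE.search(line)
        if m:
            finished[(m.group(2), m.group(3))] = parse_ts(m.group(1))
            continue
        m = DELETE_RE.search(line)
        if m:
            key = (m.group(2), m.group(3))
            started = finished.pop(key, None)
            if started is not None:
                elapsed = (parse_ts(m.group(1)) - started).total_seconds()
                undone.append((key[0], key[1], elapsed))
    return undone


# Two of the lines from the log above, copied verbatim.
sample_lines = [
    "2026-04-17 17:02:26,250 INFO (thrift-server-pool-73|156) "
    "[TabletSchedCtx.finishCloneTask():1235] clone finished: tablet id: 1768474955969, "
    "status: HEALTHY, state: FINISHED, type: BALANCE, balance: BE_BALANCE, "
    "priority: NORMAL, tablet size: 0, from backend: 1771026406616, "
    "src path hash: 8500455285475874782, to backend: 1770847642715, "
    "dest path hash: -8310306339795913933, visible version: 44978, "
    "committed version: 44978",
    "2026-04-17 17:02:54,623 INFO (tablet scheduler|156) "
    "[TabletScheduler.deleteReplicaInternal():1275] delete replica. "
    "tablet id: 1768474955969, backend id: 1770847642715. "
    "reason: DECOMMISSION state, force: false",
]

for tablet_id, backend_id, seconds in find_undone_balance_clones(sample_lines):
    print(f"tablet {tablet_id}: replica on backend {backend_id} "
          f"deleted {seconds:.1f}s after the balance clone finished")
```

For the tablet in the log above, the new replica on backend 1770847642715 is deleted about 28 seconds after the clone finishes. To scan a real log, pass an open file instead of `sample_lines`, for example `find_undone_balance_clones(open("fe.log", encoding="utf-8"))` (the path is a placeholder).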